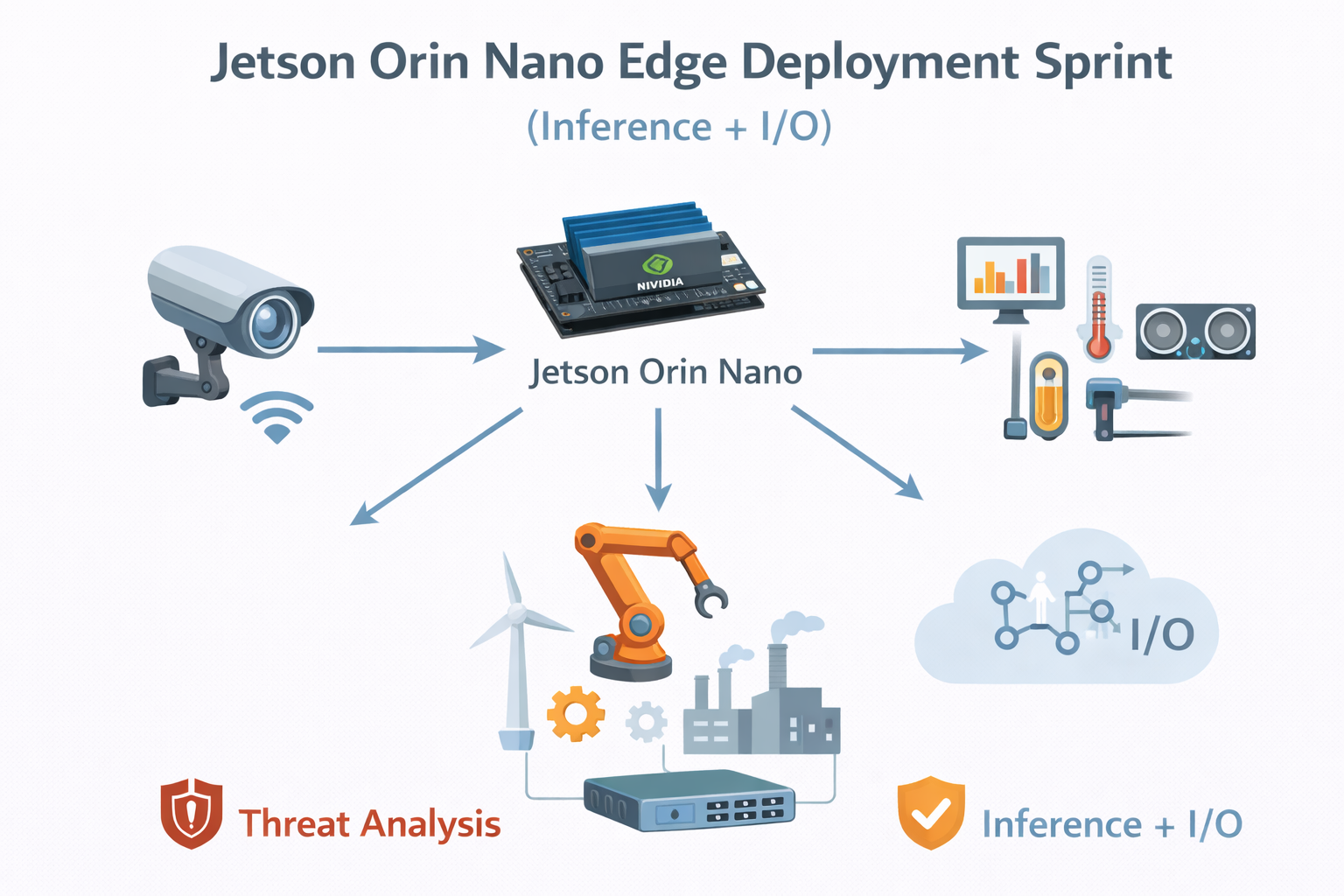

Jetson Orin Nano Edge Deployment Sprint (Inference + I/O)

$0.00

Deploy a production-ready inference service on Jetson Orin Nano with camera or sensor I/O, performance tuning, and a repeatable, restart-safe setup.

Description

This sprint is for startups, R&D teams, and product companies building edge-AI products on NVIDIA Jetson Orin Nano that need a reliable, deployable inference stack, not just a demo notebook.

If you already have a trained model and hardware in hand, this sprint turns them into a run-on-boot, observable, and maintainable edge service.

What You Get

✔ Inference deployment setup

Production-ready inference service configured using containers or systemd, depending on your deployment needs.

✔ I/O integration

Camera, serial, GPIO, or network data paths wired into the inference pipeline.

✔ Performance profiling & tuning notes

Baseline measurements (CPU/GPU usage, memory, and FPS/latency where applicable) with clear notes on actions taken or recommended.
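As a rough illustration of what a latency/FPS baseline might look like, the sketch below times repeated calls to an inference function and reports mean, median, and 95th-percentile latency. The name `measure_latency` and its `infer` argument are hypothetical placeholders, not part of any deliverable; `infer` stands in for whatever callable runs one inference in your pipeline.

```python
import statistics
import time


def measure_latency(infer, warmup=5, runs=50):
    """Time repeated calls to `infer` and summarize latency and throughput.

    `infer` is a hypothetical zero-argument callable that runs one inference.
    """
    for _ in range(warmup):
        infer()  # warm-up calls are not timed (caches, lazy initialization)

    samples_ms = []
    for _ in range(runs):
        start = time.perf_counter()
        infer()
        samples_ms.append((time.perf_counter() - start) * 1000.0)

    samples_ms.sort()
    mean_ms = statistics.fmean(samples_ms)
    # Nearest-rank style index for the 95th percentile, clamped to the last sample.
    p95_index = min(len(samples_ms) - 1, int(0.95 * len(samples_ms)))
    return {
        "runs": runs,
        "mean_ms": mean_ms,
        "p50_ms": statistics.median(samples_ms),
        "p95_ms": samples_ms[p95_index],
        "fps": 1000.0 / mean_ms if mean_ms > 0 else float("inf"),
    }
```

For example, `measure_latency(lambda: time.sleep(0.01), runs=20)` exercises the function with a stand-in workload. Numbers gathered this way cover only the timed call, so CPU/GPU usage and memory would still need to be captured separately.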

✔ Handover-ready documentation

Clear guide covering build, run, restart, logging, and update procedures.

✔ Basic health checks

Startup validation and sanity checks to confirm the service is running correctly after reboot or restart.
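A startup check of this kind could be sketched as follows, assuming the inference service listens on a local TCP port. The function names, the check name, and port 8000 are all hypothetical; the actual checks depend on how your service is exposed.

```python
import socket


def port_is_open(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def run_checks(checks):
    """Run named zero-argument checks; a check that raises counts as failed."""
    results = {}
    for name, check in checks.items():
        try:
            results[name] = bool(check())
        except Exception:
            results[name] = False
    return results


def main():
    """Return 0 if every check passes, 1 otherwise (usable as an exit code)."""
    # Hypothetical values: the port and the check name are assumptions.
    results = run_checks({
        "inference_port": lambda: port_is_open("127.0.0.1", 8000),
    })
    for name, ok in results.items():
        print(f"{name}: {'ok' if ok else 'FAILED'}")
    return 0 if all(results.values()) else 1
```

Calling `sys.exit(main())` from a small script lets a service manager or container health probe treat a non-zero exit code as a failed start.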

Scope (What’s Included)

- Jetson Orin Nano (JetPack-based Embedded Linux)
- Single inference pipeline integration
- Camera or sensor I/O (USB, CSI, serial, or network-based)
- Containerized or system-managed service
- Deployment focused on stability and repeatability

Model training, dataset preparation, and large multi-pipeline orchestration are outside this sprint and handled separately.